24/7

Operations context

Writing shaped by uptime, reliability, and high-stakes handoffs.

Gregory Cornelius turns hands-on data center experience into clear documentation, practical training support, workflow thinking, and platform concepts that help technical users do real work with confidence.

Cloud operations documentation

Workflow improvement proposals

SOPs, troubleshooting, and release notes

Audience-aware training and communication

AI-assisted revenue platform design

8

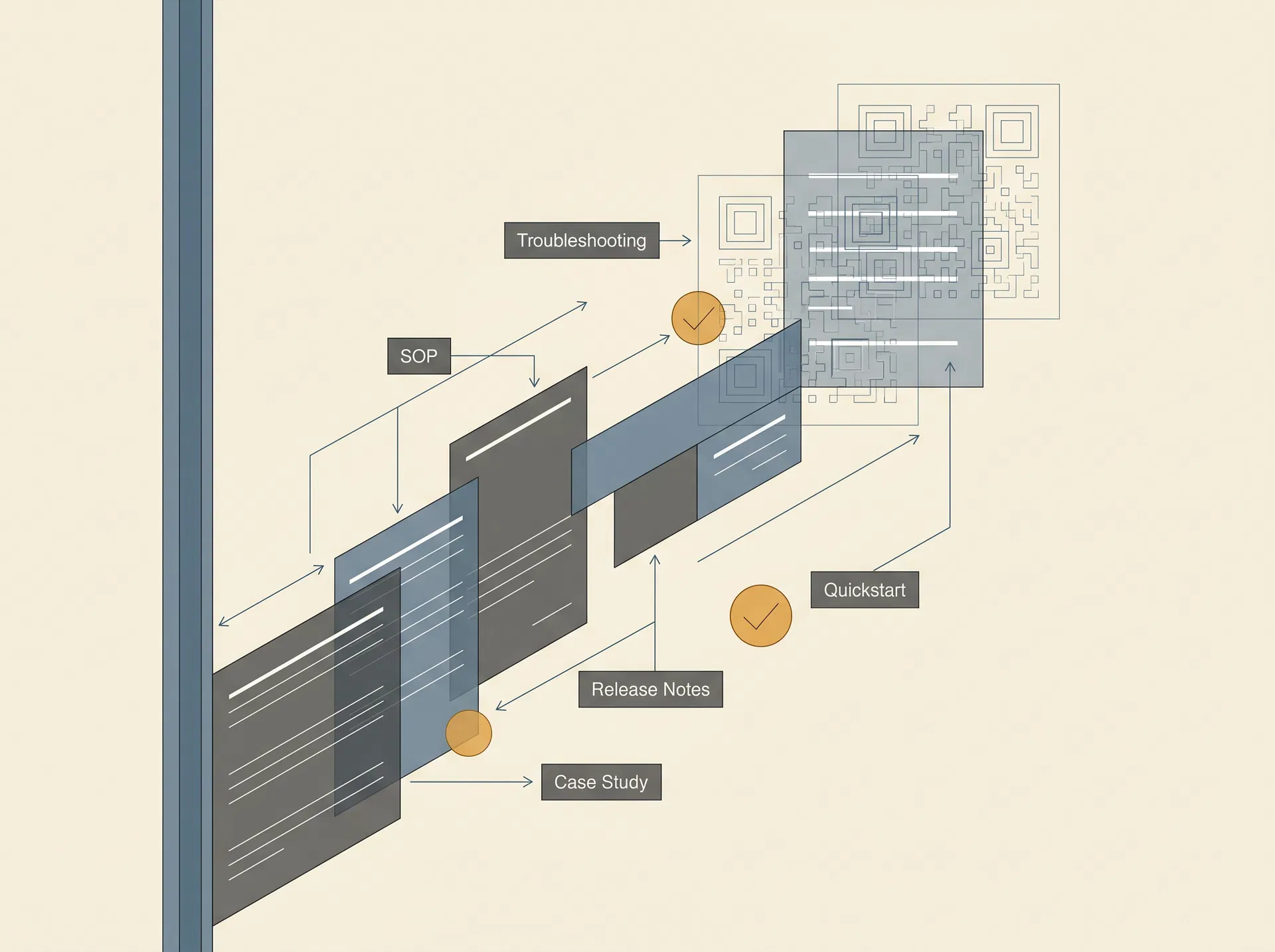

Proposal, SOP, guide, quickstart, release notes, case study, blog, and interview positioning.

3

Replit, Emergent, and Manus used for AI-assisted product and platform development.

About Gregory Cornelius

Operator-minded writer with builder range.

The photo sits inside the About Me section because it is not a detached résumé portrait. It is part of the story: technical operations, communication judgment, and platform-building initiative.

Gregory currently works as a Data Center Operations (DCO) Technician for AWS. That background matters because strong technical services depend on understanding how systems, tools, people, training needs, and process controls actually meet in the field.

A Data Center Operations Technician supports physical installation, maintenance, troubleshooting, and monitoring of servers, networking equipment, and infrastructure. In that environment, documentation, training, and practical service guidance are not decorative. They help protect uptime, security, reliability, and clear decisions in 24/7, high-stakes operations.

Current role

Gregory currently works as a DCO Tech for AWS, bringing hands-on data center operations experience into the way he understands technical systems, process controls, and documentation users.

Operational judgment

A Data Center Operations Technician works close to the physical installation, maintenance, troubleshooting, and monitoring of servers, networking equipment, and critical infrastructure.

Builder skill set

Gregory is trained in AI-assisted development and vibe coding, using Replit, Emergent, and Manus to design and build custom revenue-generating applications and platforms.

The portfolio shows how Gregory moves between strategic proposals, precise procedures, support documentation, training-oriented guidance, release communication, platform building, and technical storytelling while keeping the same core value: make technical work easier to perform and support.

Frames problems, tradeoffs, risk controls, adoption plans, and operational value.

Turns field knowledge into repeatable procedures that teams can follow under pressure.

Guides users from symptoms to corrective action with calm, structured support language.

Connects technicians, security teams, managers, support partners, and builders around shared process clarity.

Prepared by: Gregory Cornelius

Email: [email protected]

Phone: (334) 718-3911

Target roles: Technical Services Specialist, Training Specialist, Technical Writer, Cloud Documentation Specialist, Knowledge Base Writer, and Operations Documentation Specialist

Portfolio theme: Clear, practical technical services communication for cloud operations, data center workflows, technical process improvement, training support, and user enablement.

Portfolio note: The writing samples in this portfolio are fictionalized and sanitized. They are based on hands-on operational experience with data center workflow support, asset movement, checkpoint validation, technical troubleshooting, and process improvement. They do not disclose internal systems, ticket identifiers, restricted workflows, customer information, or proprietary procedures.

My writing style comes from working close to the operation. As a data center technician, I have seen how documentation affects the people doing the work: technicians trying to complete a process correctly, security teams validating movement, builders waiting for approval, and support teams trying to keep a workflow consistent across shifts. Good documentation does not just explain a tool. It reduces hesitation, prevents avoidable errors, and helps the next person complete the task the right way.

I am using my operations background as the foundation for a broader technical services path that includes training, documentation, workflow support, and technical communication. My strength is translating field experience into usable guidance. I can write for the person who needs a fast answer at a checkpoint, the manager who needs a clean proposal, the new hire who needs a clear first walkthrough, and the engineering partner who needs accurate change communication.

This portfolio intentionally uses different service and communication styles. Some samples are direct and operational. Others are more polished, instructional, narrative, or conversational. The goal is to show range while keeping the same core value: technical guidance should make work easier to perform, easier to support, and easier to improve.

| Sample | Documentation type | Tone | Primary audience | Skill demonstrated |
|---|---|---|---|---|
| 1 | Workflow improvement proposal | Direct and business-focused | Operations managers, program owners, site leadership | Problem framing, implementation planning, stakeholder awareness |
| 2 | Standard operating procedure | Precise and procedural | Technicians, security officers, operations staff | Step-by-step instruction, validation, escalation guidance |
| 3 | Troubleshooting guide | Calm and diagnostic | Support teams, new technicians, field users | Issue isolation, likely causes, corrective action |
| 4 | End-user quickstart | Friendly and educational | New cloud users, junior technicians, internal learners | Beginner-friendly explanation and task completion |
| 5 | Release notes and change communication | Concise and impact-focused | Cross-functional stakeholders | Change summary, action required, rollout messaging |
| 6 | Case study | Narrative and outcome-focused | Hiring managers, operations leaders, documentation teams | Storytelling, measurable impact, process reflection |
| 7 | Technical blog article | Conversational and thought-leadership oriented | Technical readers, documentation teams, hiring managers | Voice flexibility, insight, audience engagement |
| 8 | Interview positioning notes | Persuasive and career-focused | Recruiters and hiring teams | Portfolio framing and transition narrative |

The samples show a technical services professional who can move from field instructions to training guidance, platform concepts, and leadership-ready proposals without losing clarity.

This section makes Gregory’s service range explicit for hiring managers, project leads, and collaborators. Each category connects his data center operations experience with technical documentation, training support, platform-building initiative, and process improvement judgment.

Best fit: roles and projects that need a practical technical communicator who can document systems, support training, clarify workflows, and help turn platform ideas into usable experiences.

Document

Get clear, field-ready documentation that helps teams follow the right steps, reduce rework, and keep critical knowledge accessible.

Transforms operational knowledge into procedures, troubleshooting guides, quickstarts, release notes, and reference material that technicians and stakeholders can use without guessing.

SOPs · troubleshooting guides · quickstarts · release notes

Teach

Turn complex work into practical training resources that help new and existing team members learn faster and perform with confidence.

Builds onboarding materials, instructional content, operator support guides, and training pathways that help technical teams learn the process and apply it in real conditions.

onboarding content · job aids · lesson outlines · learner handoffs

Build

Move from idea to usable digital experience with structured platform thinking, clear user flows, and practical AI-assisted build support.

Uses no-code, AI-assisted, and vibe-coding workflows to shape platform concepts, user journeys, product documentation, launch copy, and revenue-focused digital experiences.

platform concepts · product docs · launch copy · user flows

Improve

Identify what slows teams down, clarify the process, and create practical guidance that makes everyday execution smoother.

Studies gaps between people, tools, and processes, then turns that analysis into practical proposals, process maps, service guidance, and cross-functional communication.

gap analysis · process proposals · service playbooks · stakeholder briefs

This area presents no-code and AI-assisted platform-building experience as evidence of product thinking, user enablement, service design, and documentation that supports revenue-generating workflows.

Résumé download

Download Gregory Cornelius’s résumé (PDF) for technical services, training, and technical communication opportunities.

Download PDF

Platform portfolio

A dedicated pathway for live app links, platform demos, private walkthroughs, and case studies.

Review platforms

Replit · Emergent · Manus

Designed and assembled practical platform concepts that connect audience needs, workflow logic, offer structure, and monetization paths into usable digital products.

Outcome: created a visitor-ready marketing platform that turns service positioning, offer clarity, and lead pathways into one accessible live destination.

Workflow design · AI-assisted build tools · Documentation

Used no-code and AI-assisted builders to move from process idea to working prototype, then documented how users should understand, operate, and improve the platform.

Outcome: converted process ideas into structured operating environments that make repeatable tasks easier to explain, follow, and improve.

Product framing · User guidance · Launch copy

Created platform structures where instructions, templates, onboarding copy, and user flows support commercial use rather than existing as isolated documentation assets.

Outcome: shaped knowledge assets into guided product experiences that can support onboarding, user confidence, and future revenue pathways.

Each sample is sanitized and fictionalized, but grounded in data center workflow support, asset movement validation, troubleshooting, and process improvement experience.

Process improvement proposal

Direct, practical, business-focused

Standard operating procedure

Precise, instructional, compliance-aware

Knowledge base article

Calm, diagnostic, support-oriented

End-user technical guide

Friendly, educational, beginner-aware

Release notes and stakeholder announcement

Concise, cross-functional, impact-focused

Operational case study

Narrative, reflective, outcome-focused

Technical blog article

Conversational, reflective, thought-leadership oriented

Career positioning copy

Persuasive, polished, recruiter-friendly

Documentation type: Process improvement proposal

Voice demonstrated: Direct, practical, business-focused

Audience: Operations managers, checkpoint leads, training coordinators, and workflow owners

Checkpoint teams experience delays when users must manually navigate to the correct validation workflow before approving asset movement. The delay is usually small for experienced users, but it becomes more noticeable when a security officer is newly trained, covering a different post, or using the workflow after a long gap between sessions.

The issue is not a lack of effort from the checkpoint team. The issue is that the access path depends too much on memory. When a process is time-sensitive, the entry point should be simple, visible, and consistent. If the user has to search for the correct tool before the validation can even begin, the process is already slower than it needs to be.

Place approved QR codes at each checkpoint workstation that link directly to the correct validation workflow. The QR code would not replace the existing access method. It would provide a faster, standardized entry point for users who need to reach the workflow quickly.

This approach keeps the process simple. The officer scans the code, confirms they are on the correct workflow page, and begins validation. The team does not need to memorize a navigation path or rely on another user to locate the workflow.

| Benefit | Current challenge | Improvement expected |
|---|---|---|
| Faster access | Users may spend time locating the correct workflow. | QR access sends users directly to the correct starting point. |
| Reduced training burden | New or infrequent users must remember navigation steps. | Training can focus on validation quality instead of menu navigation. |
| Fewer wrong-workflow errors | Users may open an outdated or incorrect page. | Standardized links reduce access variation. |
| Better shift consistency | Process knowledge can vary by shift or location. | The same physical access point supports the same workflow every time. |
| Improved user experience | Builders and technicians wait while the workflow is located. | Checkpoint validation begins with less friction. |

The rollout should begin with a controlled pilot at one checkpoint before expanding to all locations. The pilot should validate that the QR code opens the correct workflow, works on approved devices, and does not bypass any required authentication or authorization step.

| Requirement | Owner | Validation method |
|---|---|---|
| Generate approved QR code | Workflow owner or authorized support team | Confirm link destination and access behavior. |
| Place laminated QR code at workstation | Site operations or checkpoint lead | Confirm visibility from the normal work position. |
| Update training material | Documentation owner | Add QR access as an approved access method. |
| Notify affected teams | Operations lead | Send rollout message with effective date and support path. |
| Review after pilot | Program owner | Compare access time, user feedback, and error reports. |

The main risk is that a QR code could become outdated if the workflow URL changes. To control that risk, the QR code should point to a maintained redirect or approved landing page when possible. If a direct workflow link must be used, the code should have an owner and a review schedule.
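The ownership and review controls described above can be sketched as a small registry check. This is only an illustration, not an existing tool: the `QrCode` record, its field names, the example.com redirect URL, and the 90-day review interval are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class QrCode:
    """One posted QR code (hypothetical record layout)."""
    checkpoint: str
    target_url: str      # ideally a maintained redirect, not the raw workflow URL
    owner: str           # an empty string means nobody owns the code
    last_reviewed: date


def review_findings(code: QrCode, today: date, max_age_days: int = 90) -> list[str]:
    """Return the reasons a posted code needs attention (empty list means OK)."""
    findings = []
    if not code.owner:
        findings.append("no owner assigned")
    if today - code.last_reviewed > timedelta(days=max_age_days):
        findings.append("review overdue")
    return findings


# Illustrative values only.
code = QrCode(
    checkpoint="Checkpoint A",
    target_url="https://example.com/r/asset-validation",
    owner="",
    last_reviewed=date(2024, 1, 15),
)
print(review_findings(code, today=date(2024, 6, 1)))
```

A check like this could run on whatever schedule the program owner sets; the point is that every posted code has a named owner and a review date that someone can audit.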

A second risk is that users may assume the QR code replaces training. It should not. The QR code only improves access. Users still need to understand what they are validating, what information must match, and when to escalate.

The proposal should be considered successful if checkpoint users can reach the correct workflow without manual navigation, new users can complete access with minimal coaching, and no increase appears in validation errors after rollout. The best outcome is a faster process that stays controlled, auditable, and easy to support.
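Two of these success checks, access time and validation errors, lend themselves to a simple before-and-after comparison. The sketch below is one possible way to summarize a pilot; the function name, the use of the median, and the sample numbers are assumptions, and the numbers are placeholders rather than measurements.

```python
from statistics import median


def pilot_review(before_secs, after_secs, errors_before, errors_after):
    """Summarize two pilot checks: access time and validation errors."""
    return {
        "access_faster": median(after_secs) < median(before_secs),
        "no_error_increase": errors_after <= errors_before,
    }


# Placeholder numbers for illustration; they are not measurements.
result = pilot_review(
    before_secs=[40, 55, 35, 60],
    after_secs=[12, 15, 10, 20],
    errors_before=3,
    errors_after=3,
)
print(result)
print("pilot passes these checks:", all(result.values()))
```

User feedback and coaching effort are harder to reduce to a number and would still need a short qualitative review alongside these checks.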

Documentation type: Standard operating procedure

Voice demonstrated: Precise, instructional, compliance-aware

Audience: Security officers, data center technicians, and operations support staff

This procedure explains how to validate asset movement at a controlled checkpoint when a technician or builder is transporting a part, device, or component out of a restricted work area. The goal is to confirm that the asset has a valid workflow record, the person transporting it is authorized, and the checkpoint decision is recorded consistently.

This SOP applies to routine checkpoint validation for approved asset movement. It does not apply to emergency removal, incident response, law enforcement requests, or any situation where the asset record is missing and cannot be verified through the normal workflow.

Before beginning validation, confirm that you have access to the approved validation workflow, your device is connected to the required network, and you are signed in with your assigned credentials. Do not use another person's session. If the workflow is unavailable, follow the escalation path instead of approving movement manually.

| Required item | Why it matters |
|---|---|
| Approved workflow access | Ensures the validation is recorded in the correct system. |
| User identification | Confirms the person transporting the asset can be tied to the request. |
| Asset identifier or ticket reference | Allows the checkpoint team to match the physical asset to the workflow record. |
| Current checkpoint instructions | Ensures the officer follows the latest site-specific requirements. |

1. Ask the technician or builder to provide the asset identifier, ticket reference, or approved workflow record. The checkpoint should not begin with assumptions about the asset status.
2. Open the approved validation workflow using the standard access method for the checkpoint. If a QR code or shortcut is provided, confirm that it opens the expected workflow before proceeding.
3. Search for the asset record using the identifier provided. If multiple records appear, use the most specific matching information available, such as part type, request status, timestamp, or assigned owner.
4. Compare the workflow record to the physical asset. Confirm that the asset type, visible label, custody status, and removal reason match the request.
5. Confirm that the person transporting the asset is the person listed in the workflow record or is otherwise authorized by the process. If the person does not match the record, pause the validation and escalate.
6. Approve or deny movement according to the workflow status. Do not approve movement if the record is incomplete, expired, assigned to another person without authorization, or missing required details.
7. Record the checkpoint action in the workflow. Include the checkpoint location, validation result, and any exception notes required by the process.
8. Return the asset to the technician or builder only after the workflow has been updated. If movement is denied, explain the next required step without making informal exceptions.
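The decision points in this procedure can be summarized as a short function. This is a simplified sketch for training discussion, not a representation of any real system: the `WorkflowRecord` fields, the status values, and the function name are hypothetical, and the "otherwise authorized by the process" case is deliberately left to escalation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkflowRecord:
    """Hypothetical shape of a checkpoint workflow record."""
    asset_id: str
    asset_type: str
    assigned_to: str
    status: str                        # assumed values: "ready", "pending", "expired"
    removal_reason: Optional[str] = None


def checkpoint_decision(record: Optional[WorkflowRecord], carrier: str,
                        observed_asset_type: str) -> str:
    """Return "approve", "deny", or "escalate" for one validation."""
    if record is None:
        return "escalate"              # record cannot be located
    if carrier != record.assigned_to:
        return "escalate"              # person does not match the record
    if observed_asset_type != record.asset_type:
        return "deny"                  # asset details do not match
    if record.status != "ready" or not record.removal_reason:
        return "deny"                  # pending, expired, or incomplete record
    return "approve"


# Illustrative values only.
record = WorkflowRecord("ABC-123", "drive", "tech-01", "ready", "scheduled replacement")
print(checkpoint_decision(record, carrier="tech-01", observed_asset_type="drive"))
```

Every path other than a complete, matching, ready record ends in a denial or an escalation, which mirrors the rule that the checkpoint never approves on assumption.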

If the workflow is unavailable, the asset record cannot be located, or the user cannot provide the required identifier, stop the normal process and escalate to the designated support contact. The checkpoint should not create an unofficial workaround unless the site has an approved downtime procedure.

| Exception | Required action | Do not do this |
|---|---|---|
| Workflow will not load | Escalate to workflow support or site operations. | Do not approve based only on verbal confirmation. |
| Asset record is missing | Ask the technician to confirm the reference and recheck the workflow. | Do not create a new record on behalf of the user unless authorized. |
| Person does not match record | Pause movement and contact the listed owner or support path. | Do not transfer custody informally. |
| Asset details do not match | Deny movement until the record is corrected. | Do not edit the record to force a match without approval. |

The process is complete when the workflow shows the checkpoint decision, the asset status reflects the correct movement result, and the technician or builder has been informed of the decision. A complete validation should leave enough information for another authorized user to understand what was approved, denied, or escalated.

Documentation type: Knowledge base article

Voice demonstrated: Calm, diagnostic, support-oriented

Audience: Checkpoint users, operations support, and newly trained technicians

Use this guide when an asset validation workflow is taking longer than expected, the record cannot be found, or the checkpoint user is unsure why the asset is not ready for movement. The purpose is to isolate the issue quickly without skipping required controls.

A delay does not always mean the system is down. In many cases, the record is still in the wrong status, the user is searching with the wrong identifier, or the request was completed in one workflow but not passed to the checkpoint validation queue.

| Symptom | Most likely cause | First action | Escalate if |
|---|---|---|---|
| Record cannot be found | Wrong identifier, incomplete ticket, or record not submitted | Confirm the asset ID and request reference with the technician. | The identifier is correct but still produces no result. |
| Record appears but cannot be approved | Status is pending, expired, or assigned to another owner | Review the workflow status and required next step. | The status appears incorrect or conflicts with the physical process. |
| Workflow loads slowly | Network latency, browser session issue, or service degradation | Refresh once, confirm network access, and retry in a clean session. | Multiple users report the same issue. |
| QR code opens the wrong page | Outdated code or changed workflow path | Use the manual access path and report the QR code. | More than one checkpoint has the same issue. |
| User is authorized but not listed | Custody transfer not completed | Ask the user to complete or update the transfer step. | The original owner is unavailable or the process is blocked. |

Start by confirming the basics. Ask for the exact asset identifier or ticket reference and enter it carefully. If the record does not appear, try one alternate approved identifier. Avoid repeated random searches because they slow the checkpoint and increase the chance of selecting the wrong record.

If the record appears, read the status before making a decision. A record that exists is not automatically approved for movement. The workflow status should clearly show that the asset is ready for checkpoint validation. If the status is pending or assigned to another person, the correct action is to pause and correct the workflow, not approve the asset based on conversation.

If the workflow itself is slow or unavailable, check whether the issue affects only one user or multiple users. A single-user issue may be caused by a browser session, device state, or network connection. A multi-user issue may indicate a broader service problem and should be escalated with the time, location, screenshots if permitted, and exact error message.

A good escalation saves time because support does not have to ask for the same information later. Include the checkpoint location, time of issue, asset identifier, workflow status, user role, error message, and whether the issue affects one user or multiple users.

Escalation example: “At 14:20 local time, checkpoint team could not locate asset record ABC-123 using the approved validation workflow. Technician provided ticket reference TCK-456. Manual search by asset ID and ticket reference returned no result. Issue observed by two users on separate devices.”
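One way to make sure an escalation carries every item listed above is to check it against a fixed field list before sending. The sketch below assumes a plain dictionary report; the field names, the output format, and the sample values beyond those in the example above are hypothetical.

```python
REQUIRED_FIELDS = (
    "checkpoint_location", "time_of_issue", "asset_identifier",
    "workflow_status", "user_role", "error_message", "users_affected",
)


def missing_fields(report: dict) -> list[str]:
    """List required fields that are absent or blank."""
    missing = []
    for name in REQUIRED_FIELDS:
        value = report.get(name)
        if value is None or not str(value).strip():
            missing.append(name)
    return missing


def format_escalation(report: dict) -> str:
    """Render a one-line escalation, refusing incomplete reports."""
    missing = missing_fields(report)
    if missing:
        raise ValueError("incomplete escalation: " + ", ".join(missing))
    return " | ".join(f"{name}: {report[name]}" for name in REQUIRED_FIELDS)


# Illustrative values only.
report = {
    "checkpoint_location": "Checkpoint A",
    "time_of_issue": "14:20 local time",
    "asset_identifier": "ABC-123",
    "workflow_status": "record not found",
    "user_role": "security officer",
    "error_message": "search returned no result",
    "users_affected": "two users on separate devices",
}
print(format_escalation(report))
```

Rejecting an incomplete report up front is the design choice here: it moves the follow-up questions to the person who still has the details in front of them.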

The issue is resolved when the asset record is visible, the workflow status is clear, the checkpoint action can be recorded, and the user understands whether movement is approved, denied, or pending correction.

Documentation type: End-user technical guide

Voice demonstrated: Friendly, educational, beginner-aware

Audience: Junior cloud users, operations technicians learning cloud documentation, and internal enablement teams

This quickstart explains how to plan a simple lifecycle rule for archived log files stored in Amazon S3. The goal is not to cover every storage design choice. The goal is to show how a user can think through retention, transition, and expiration before creating a rule.

Amazon S3 Lifecycle configurations use rules to define actions that Amazon S3 applies to objects over time. These actions can include transitioning objects to another storage class or expiring objects when they are no longer needed.[1] That makes lifecycle rules useful for log archives, temporary exports, and other data that has a predictable retention period.

A small operations team stores exported application logs in an S3 bucket. The team needs the logs available for recent troubleshooting, but older logs are rarely accessed. The team wants a documented retention plan before applying any lifecycle rule.

| Requirement | Decision for this quickstart |
|---|---|
| Data type | Application log exports |
| Recent access period | Keep immediately available for 30 days |
| Archive period | Move older logs to lower-cost storage after 30 days |
| Final retention | Delete after 365 days if no legal hold applies |
| Safety check | Test on a prefix before applying broadly |

Before creating a lifecycle rule, confirm the data owner, retention requirement, compliance restrictions, and recovery expectations. A lifecycle rule can reduce manual cleanup, but it should not be used as a shortcut for unclear data governance.

If the data may be needed for audit, legal, or customer-impact investigation, confirm the retention period with the right owner first. It is easier to delay a cleanup rule than to recover data that should not have expired.

1. Identify the bucket and prefix that contain the archived logs. For example, the team may choose a prefix such as logs/app-a/archive/ instead of applying the rule to the entire bucket.
2. Define the transition timing. In this example, logs remain in their current storage class for 30 days so the team can access recent files quickly, then move to lower-cost storage.
3. Define the expiration timing. In this example, logs expire after 365 days because the team does not need routine access beyond one year.
4. Apply the rule to a test prefix first. Use a small sample set so the team can confirm the rule behaves as expected before applying it to production data.
5. Document the rule owner, business reason, transition timing, expiration timing, and review date. A lifecycle rule should be understandable six months later by someone who did not create it.
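Before creating anything in S3, it can help to reason through what the planned rule intends for objects of different ages. The sketch below only models that plan on paper: the function name and sample keys are hypothetical, and the 30-day and 365-day values come from the decisions in this quickstart. S3 applies lifecycle actions asynchronously, so real objects may change state somewhat later than the day count suggests.

```python
TRANSITION_AFTER_DAYS = 30     # recent access period from the quickstart
EXPIRE_AFTER_DAYS = 365        # final retention from the quickstart
RULE_PREFIX = "logs/app-a/archive/"


def planned_state(key: str, age_days: int) -> str:
    """Describe what the planned rule intends for an object of a given age."""
    if not key.startswith(RULE_PREFIX):
        return "not covered by rule"
    if age_days >= EXPIRE_AFTER_DAYS:
        return "expired"
    if age_days >= TRANSITION_AFTER_DAYS:
        return "lower-cost storage"
    return "current storage class"


# Hypothetical object keys and ages, used only to walk through the plan.
samples = [
    ("logs/app-a/archive/2024-01-01.log", 10),
    ("logs/app-a/archive/2023-11-01.log", 45),
    ("logs/app-a/archive/2022-12-01.log", 400),
    ("logs/app-b/archive/2023-11-01.log", 45),
]
for key, age in samples:
    print(f"{key} ({age} days): {planned_state(key, age)}")
```

Walking a handful of sample keys through the plan is a cheap way to catch a prefix that is broader or narrower than intended.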

| Field | Example value |
|---|---|
| Rule name | archive-app-a-logs-after-30-days |
| Bucket area | logs/app-a/archive/ |
| Business owner | Operations analytics team |
| Transition action | Move objects to lower-cost storage after 30 days |
| Expiration action | Expire objects after 365 days |
| Review date | Quarterly or after application logging changes |
| Rollback plan | Disable rule and review affected prefix before reapplying |
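The documented rule above maps fairly directly onto the structure of an S3 lifecycle configuration. The sketch below builds that structure as a Python dictionary and prints it as JSON. The quickstart does not name a target storage class, so `STANDARD_IA` is an assumption made for illustration; the rule ID and prefix come from the table.

```python
import json

# Lifecycle configuration mirroring the documented rule.
lifecycle_configuration = {
    "Rules": [
        {
            "ID": "archive-app-a-logs-after-30-days",
            "Filter": {"Prefix": "logs/app-a/archive/"},
            "Status": "Enabled",
            "Transitions": [
                # Assumed storage class; confirm the right class with the data owner.
                {"Days": 30, "StorageClass": "STANDARD_IA"}
            ],
            "Expiration": {"Days": 365},
        }
    ]
}

print(json.dumps(lifecycle_configuration, indent=2))
```

With boto3, a dictionary of this shape could be passed as the `LifecycleConfiguration` argument of the S3 client's `put_bucket_lifecycle_configuration` call, together with `Bucket`. That call replaces the bucket's existing lifecycle configuration, so any rules already in place need to be included in the same request. For the test step, the same structure can be pointed at a test prefix first.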

After the rule is created, confirm that it applies only to the intended prefix, does not conflict with other lifecycle rules, and is visible in the bucket lifecycle configuration. The rule should also be documented in the team’s runbook or data retention inventory.

Lifecycle rules are technical settings, but they are also operational commitments. A clear rule tells future users what data is being managed, why it is being managed, and when it will no longer be available. That is the difference between automation that helps the team and automation that creates confusion later.

Documentation type: Release notes and stakeholder announcement

Voice demonstrated: Concise, cross-functional, impact-focused

Audience: Security officers, operations teams, training coordinators, support teams, and site leadership

A new checkpoint access method is being introduced to help users open the approved asset validation workflow faster and more consistently. Approved QR codes will be placed at checkpoint workstations and will link users to the current validation workflow.

This update does not change the approval requirements for asset movement. It only changes how users access the workflow.

| Area | Previous experience | Updated experience |
|---|---|---|
| Workflow access | Users manually navigate to the validation workflow. | Users may scan the approved QR code at the checkpoint. |
| Training | Training includes the manual navigation path. | Training includes both manual navigation and QR access. |
| Support | Users report workflow access issues through the existing support path. | Users continue to use the same support path and should include QR location if reporting a code issue. |
| Approval process | Asset movement is approved only through the required workflow. | No change. Approval requirements remain the same. |

Security officers and checkpoint users are the primary users affected by this change. Data center technicians and builders may experience faster checkpoint processing, but they do not need to take a new action unless instructed by the checkpoint team.

Training coordinators should update onboarding material to include QR access as an approved method. Support teams should be prepared to identify whether an issue is related to workflow access, QR placement, or the validation process itself.

Checkpoint leads should confirm that QR codes are installed in visible locations and that each code opens the correct workflow. Training owners should update local job aids and remove outdated screenshots where applicable. Users should continue to follow the normal validation process after opening the workflow.

If a QR code does not open the correct workflow, use the manual access path and report the issue. The report should include the checkpoint location, the time observed, the device type if relevant, and a short description of the page that opened.

This update is intended to reduce access friction while preserving the same validation controls. The success of the change should be measured by user feedback, reduced access-related delays, and no increase in validation exceptions.

Documentation type: Operational case study

Voice demonstrated: Narrative, reflective, outcome-focused

Audience: Hiring managers, documentation leaders, operations managers, and process improvement teams

In a controlled operations environment, a validation workflow is only useful if the right users can reach it quickly and use it correctly. The process may be technically sound, but if the access path is unclear, the field experience still suffers. That gap is where documentation and process design overlap.

The team observed that checkpoint validation sometimes slowed down before the actual review began. The delay was not caused by the validation requirements themselves. It was caused by the steps users had to take to find the correct workflow, especially when they were new to the process or covering a checkpoint temporarily.

The process had three competing needs. It needed to be fast enough for a live checkpoint environment, controlled enough to protect asset movement, and simple enough for users with different levels of experience. A solution that only improved speed but weakened validation would not be acceptable. A solution that added more training but no practical access improvement would not solve the root problem.

| Need | Why it mattered |
|---|---|
| Speed | Checkpoint users needed to begin validation without unnecessary navigation delay. |
| Control | Asset movement still required proper workflow review and recorded approval. |
| Consistency | Users across shifts needed the same starting point and the same instructions. |
| Supportability | The solution needed a clear owner, update path, and escalation method. |

The proposed solution was to add a visible, approved QR code at the checkpoint that opened the validation workflow directly. The documentation approach was just as important as the technical access method. The change needed a short proposal, a user-facing job aid, updated training language, and a support note explaining how to report an outdated or incorrect code.

This kept the solution lightweight. It did not require users to learn a new system. It did not remove the existing manual path. It simply made the correct starting point easier to reach.

The expected result was a smoother checkpoint experience with less dependence on memory and informal coaching. For new users, the QR code provided a clear first step. For experienced users, it reduced repetitive navigation. For support teams, it created a more consistent access point to troubleshoot.

The larger lesson is that documentation is not only the page users read after a tool is built. Documentation can be part of the workflow design. A well-placed job aid, a clear access method, and a concise support path can remove friction without changing the underlying system.

This case study demonstrates how I approach documentation from the field user’s perspective. I look for the point where confusion starts, identify who is affected, propose a practical fix, and write the supporting content needed to make the change usable. That combination of operational awareness and writing discipline is the value I would bring to a technical writing role.

Documentation type: Technical blog article

Voice demonstrated: Conversational, reflective, thought-leadership oriented

Audience: Technical writers, operations teams, cloud support teams, and hiring managers

Technical documentation is often judged by how complete it is. Completeness matters, but it is not the full measure of whether documentation works. The real test is what happens when a user is under time pressure, standing at a workstation, trying to complete a task without making a mistake.

That is where my view of documentation comes from. In operations work, the user does not always have time to read a long article from the beginning. They need the right answer in the right place, written in language that matches the task. If the document is accurate but too hard to use, the process still breaks down.

Good technical writing starts with respect for the user’s situation. A checkpoint officer may need a quick validation path. A technician may need to know whether a part can move to the next step. A support engineer may need the exact error message and workflow status before they can help. Each user needs different information, but they all need clarity.

| User situation | Documentation that helps |
|---|---|
| New user learning a process | A quickstart with screenshots, prerequisites, and success criteria. |
| Experienced user completing a routine task | A short SOP with clear decision points. |
| User blocked by an error | A troubleshooting guide organized by symptom and likely cause. |
| Manager approving a change | A proposal that explains the problem, impact, risk, and rollout plan. |
| Cross-functional team preparing for rollout | Release notes that explain what changed and what action is required. |

Cloud and data center environments both depend on reliable documentation. Amazon CloudWatch alarms, for example, can watch metrics and send notifications or take actions when thresholds are reached.[2] That capability is technical, but users still need documentation that explains what the alarm means, who owns the response, and what to do when it triggers. The setting alone is not enough. The workflow around the setting is what makes it operational.
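One way to keep that operational context attached to the alarm is to write it into the alarm definition itself. The sketch below is illustrative only. It builds keyword arguments in the shape that the boto3 `put_metric_alarm` call accepts, but it does not call AWS. The `AlarmRunbook` class, the alarm name, the instance ID, the SNS topic ARN, and the threshold values are all placeholders I made up for the example.

```python
from dataclasses import dataclass


@dataclass
class AlarmRunbook:
    """Operational context that the alarm setting alone does not carry."""
    meaning: str     # what the alarm is telling the reader
    owner: str       # who owns the response
    first_step: str  # what to do when the alarm triggers


def build_alarm_params(runbook: AlarmRunbook) -> dict:
    """Return keyword arguments shaped for CloudWatch put_metric_alarm."""
    description = (
        f"Meaning: {runbook.meaning} | "
        f"Owner: {runbook.owner} | "
        f"First step: {runbook.first_step}"
    )
    return {
        "AlarmName": "example-high-cpu",  # hypothetical name
        "AlarmDescription": description,
        "Namespace": "AWS/EC2",
        "MetricName": "CPUUtilization",
        # Placeholder instance ID, not a real resource.
        "Dimensions": [{"Name": "InstanceId", "Value": "i-0123456789abcdef0"}],
        "Statistic": "Average",
        "Period": 300,            # seconds per evaluation window (assumed)
        "EvaluationPeriods": 2,   # consecutive windows before alarming (assumed)
        "Threshold": 80.0,        # percent CPU (assumed)
        "ComparisonOperator": "GreaterThanThreshold",
        # Placeholder SNS topic ARN for notifications.
        "AlarmActions": ["arn:aws:sns:us-east-1:123456789012:example-ops-topic"],
    }


runbook = AlarmRunbook(
    meaning="Sustained high CPU on the example host",
    owner="Example operations on-call",
    first_step="Check recent deployments, then follow the CPU troubleshooting guide",
)
params = build_alarm_params(runbook)
print(params["AlarmDescription"])
```

With credentials and real resource names in place, these arguments could be passed as `boto3.client("cloudwatch").put_metric_alarm(**params)`. The point of the sketch is the description field: the person who receives the notification sees the meaning, the owner, and the first step without searching for a separate page.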

The same principle applies to data retention. Amazon S3 lifecycle rules can transition or expire objects over time.[1] A lifecycle rule can be configured in the console, but a good document explains why the rule exists, what data it affects, who approved it, and when it should be reviewed. Technical accuracy and operational context need to work together.
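To make that concrete, here is a minimal sketch that keeps the rule and its documentation in one record. The `LifecycleRuleDoc` class, the `logs/` prefix, the approver, the dates, and the day counts are hypothetical. The dictionary it produces follows the shape that the boto3 `put_bucket_lifecycle_configuration` call expects for its `LifecycleConfiguration` argument, but nothing here contacts AWS.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class LifecycleRuleDoc:
    """A lifecycle rule plus the context a reader needs to trust it."""
    rule_id: str
    prefix: str           # what data the rule affects
    reason: str           # why the rule exists
    approved_by: str      # who approved it
    review_by: date       # when it should be reviewed
    transition_days: int  # days before moving to infrequent access
    expire_days: int      # days before objects expire

    def to_lifecycle_configuration(self) -> dict:
        """Build the rule in the S3 lifecycle configuration shape."""
        return {
            "Rules": [
                {
                    "ID": self.rule_id,
                    "Filter": {"Prefix": self.prefix},
                    "Status": "Enabled",
                    "Transitions": [
                        {"Days": self.transition_days, "StorageClass": "STANDARD_IA"}
                    ],
                    "Expiration": {"Days": self.expire_days},
                }
            ]
        }

    def review_due(self, today: date) -> bool:
        """Return True once the review date has arrived."""
        return today >= self.review_by

    def summary(self) -> str:
        """One-line description suitable for a runbook or change record."""
        return (
            f"{self.rule_id}: objects under '{self.prefix}' move to STANDARD_IA "
            f"after {self.transition_days} days and expire after "
            f"{self.expire_days} days. Reason: {self.reason}. "
            f"Approved by: {self.approved_by}. Review by: {self.review_by.isoformat()}."
        )


doc = LifecycleRuleDoc(
    rule_id="example-log-retention",
    prefix="logs/",
    reason="Reduce storage cost for logs that are rarely read after a month",
    approved_by="Example data owner",
    review_by=date(2026, 1, 15),
    transition_days=30,
    expire_days=365,
)
config = doc.to_lifecycle_configuration()
print(doc.summary())
```

Keeping the reason, approver, and review date next to the rule means the document and the configuration are updated together instead of drifting apart.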

That is the kind of technical writing I want to do. I want to write documents that are accurate enough for technical teams, practical enough for field users, and clear enough that someone can follow them without needing the original author in the room.

Documentation type: Career positioning copy

Voice demonstrated: Persuasive, polished, recruiter-friendly

Audience: Recruiters, hiring managers, documentation leads, and interview panels

This portfolio shows how I translate hands-on technical operations experience into clear documentation. My background as a data center operations technician gives me direct experience with field workflows, asset movement, support escalation, and process improvement. I use that experience to write documentation that helps users complete tasks correctly, reduces avoidable delays, and supports consistent execution across teams.

If asked why I am moving from data center operations work into technical writing, I would explain it this way:

“In my operations work, I kept seeing how much documentation affects execution. When the documentation is clear, people move faster and make fewer mistakes. When it is unclear, even a good process becomes harder to follow. I started paying attention to the gap between the system design and the user experience. Technical writing is a natural transition because I can use my field experience to write documentation that is practical, accurate, and useful for the people doing the work.”

| Strength | How to explain it |
|---|---|
| Field experience | “I understand how documentation is used in live operations, not just how it looks in a repository.” |
| Process improvement mindset | “I can identify where users get blocked and turn that into clearer documentation or a better workflow.” |
| Audience awareness | “I can write for technicians, security users, managers, and support teams without using the same tone for every audience.” |
| Technical credibility | “I am comfortable with cloud and data center concepts, and I know how to ask the right questions when documenting a technical process.” |
| Practical writing style | “My writing is direct, structured, and focused on helping users complete the task.” |

The workflow proposal demonstrates that I can identify an operational problem, explain its impact, and recommend a realistic solution. The SOP and troubleshooting guide show that I can write structured documentation for repeatable tasks and support scenarios. The quickstart shows that I can explain a cloud concept to a less experienced user. The release notes show that I can communicate change across teams. The case study and blog article show that I can tell a technical story and explain why documentation matters.

I am not approaching technical writing only as someone who likes to write. I am approaching it as someone who has worked inside technical operations and understands what users need from documentation. I can bring practical judgment, clear writing, and a strong sense of user impact to a technical writing team.

This portfolio can be adjusted depending on the role. For a developer documentation role, the quickstart can be expanded with command-line examples, API references, or configuration snippets. For a cloud documentation role, the S3 lifecycle sample and monitoring discussion can be expanded into a larger cloud operations guide. For an internal knowledge-base role, the SOP, troubleshooting guide, and release notes should be emphasized.

| Target role | Samples to lead with | Suggested emphasis |
|---|---|---|
| Technical Writer | SOP, troubleshooting guide, release notes | Clarity, structure, audience adaptation |
| Developer Documentation Writer | Quickstart, technical blog, troubleshooting guide | Technical learning, examples, accuracy |
| Cloud Documentation Specialist | S3 lifecycle quickstart, CloudWatch discussion, SOP | Cloud concepts, operational context, supportability |
| Knowledge Base Writer | Troubleshooting guide, SOP, release notes | Searchable answers, support workflows, issue resolution |
| Process Documentation Specialist | Workflow proposal, case study, SOP | Operational improvement, rollout planning, controls |

I recommend introducing the portfolio with a short note when sending it to recruiters or hiring managers:

“I created this portfolio to show how my data center operations experience translates into technical writing. The samples are sanitized and fictionalized, but they reflect the kind of work I have been close to: operational workflows, asset validation, troubleshooting, user enablement, and cloud process documentation. I included multiple formats to show that I can adapt my voice for proposals, SOPs, quickstarts, support articles, release notes, case studies, and technical blog content.”

Use the form for technical services roles, training opportunities, platform conversations, résumé follow-ups, or project questions. The form validates your details first, then prepares a ready-to-send email to Gregory.

Validation built in: name, email format, topic, and message context are checked before sending.

Static-site friendly: the message opens in the visitor’s email app with the subject and body already filled in.
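For readers curious how a validate-then-email form like this can work without a server, here is a minimal sketch of the logic in Python. It is not the code this site runs, which would normally live in the browser. The recipient address, the minimum message length, the error wording, and the subject format are assumptions made for illustration.

```python
import re
from urllib.parse import quote

# Simple shape check only; it does not prove the address exists.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
RECIPIENT = "gregory@example.com"  # hypothetical address
MIN_MESSAGE_LENGTH = 20            # assumed threshold for "message context"


def validate(name: str, email: str, topic: str, message: str) -> list[str]:
    """Return a list of problems; an empty list means the form is valid."""
    errors = []
    if not name.strip():
        errors.append("Enter your name.")
    if not EMAIL_PATTERN.match(email.strip()):
        errors.append("Enter a valid email address.")
    if not topic.strip():
        errors.append("Choose a topic.")
    if len(message.strip()) < MIN_MESSAGE_LENGTH:
        errors.append("Add a little more detail to your message.")
    return errors


def build_mailto(name: str, email: str, topic: str, message: str) -> str:
    """Validate first, then build a mailto link with subject and body filled in."""
    errors = validate(name, email, topic, message)
    if errors:
        raise ValueError("; ".join(errors))
    subject = f"{topic.strip()} inquiry from {name.strip()}"
    # Line breaks in a mailto body are percent-encoded CRLF pairs.
    body = f"{message.strip()}\r\n\r\nReply to: {email.strip()}"
    return f"mailto:{RECIPIENT}?subject={quote(subject)}&body={quote(body)}"


problems = validate("", "not-an-email", "", "Hi")
link = build_mailto(
    "Ada",
    "ada@example.com",
    "Training",
    "I would like to discuss a training opportunity.",
)
print(link)
```

Because the link is only built after validation passes, the visitor's email app never opens with a half-finished message. The visitor still reviews and sends the email themselves.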